What is AI literacy?

Now you will know.

There are lots of people talking about something called “AI literacy”. I get the feeling that a lot of people are trying to define “AI literacy” in the academic space because this will get them citations (not sorry if this is you). Other people are arguing that AI literacy is not even an important thing to define. I really agree with the conclusion here:

we should focus on what makes us uniquely valuable as embodied, social, and organic creatures who are compelled to be with, empathise with, learn from, and think with one another. Our ability to navigate human networks and our role within them is our superpower.

I was talking last night with someone who studies computer science education about what AI literacy is. Neither of us seemed to know. But over the course of the discussion we arrived at a definition that could probably drop the AI part and simply describe one part of problem solving. Below I describe what I will define as “AI literacy”.

AI Literacy according to John

I have tried to embrace lots of AI tools as they come out and integrate them into my research. This includes both using tools like AI coding agents and developing and training neural networks for a variety of tasks, generally related to one of my many current focuses (foci?), which is rock-fluid-climate interaction. Feel free to read more about that here. Across all this effort I have realized that the level of abstraction I work at matters much more than it used to. What do I mean by “level of abstraction”?

Sometimes I want to build a data dashboard. For example, I had a plan to work with a colleague on a large set of time series data. I thought it silly to mess around in a Jupyter notebook because it’s somewhat unwieldy when you want to look at ten different time series at once. So I texted my AI agent on Telegram what I wanted and pointed them at the repo with the data, and they did exactly what I wanted without further interaction with me. That was fantastic. It would have taken me a week to make that dashboard.

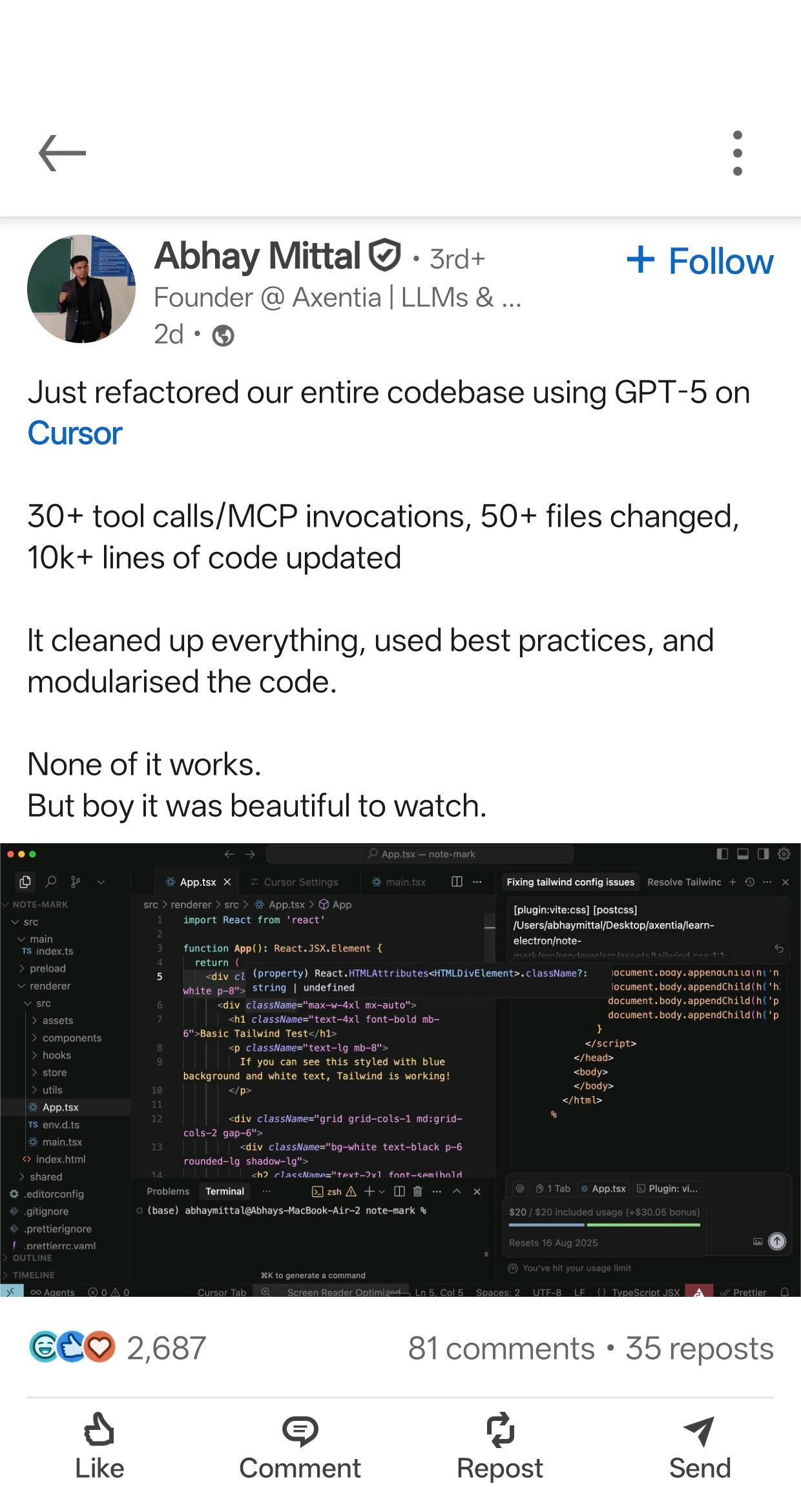

Other times I want to train a neural network on some data to build a classifier, do some kind of prediction, or whatever. I need to know a lot of specifics about the architecture and how that architecture changes as I explore what best fits the data and solves the problem. If I ask my AI agent to do this, nothing works. And I know this because I tried. It was me literally experiencing this meme:

I had to spend an afternoon undoing the mess created by me letting an AI agent loose on my codebase.

The difference between those two experiences highlights exactly what “AI literacy” could be. There is a spectrum of context that you should consider when using an AI tool. You should be constantly assessing whether you are operating at the correct abstraction layer to solve the problem at hand. That abstraction layer acts as a sort of tuning knob, or hyperparameter, for how involved you are in what the computer is doing. AI literacy is understanding how much you need to understand about what the computer is doing in order to assess whether it did what you wanted or not.

Steve Jobs and Apple liked to say that when they built the personal computer, they built a bicycle for your mind. You can watch him say it in the video above. I really buy into this concept and think it’s not just marketing hype. Computers are tools that can help us conceptualize problems beyond what we can do on our own. This is all that AI tools are doing. They abstract away parts of the problem space so we can focus on understanding the important part. Understanding what needs to be abstracted at the current problem-solving step is AI literacy.